Today is a very special day for me. I've decided to leave my full-time job at Klarna to dedicate myself to building Prompt Studio, and hopefully to become part of something we are all witnessing at the moment: the remarkable push toward AI becoming a greater part of our daily lives. Until now I could only invest a few hours of my free time each day, a compromise that left me daydreaming about what Prompt Studio could be and unhappy with the progress I was making. Today is my first day working full-time on Prompt Studio, and I know it is the start of an exciting journey. So what do we want Prompt Studio to become?

Prompt Engineering and Reasoning Engines

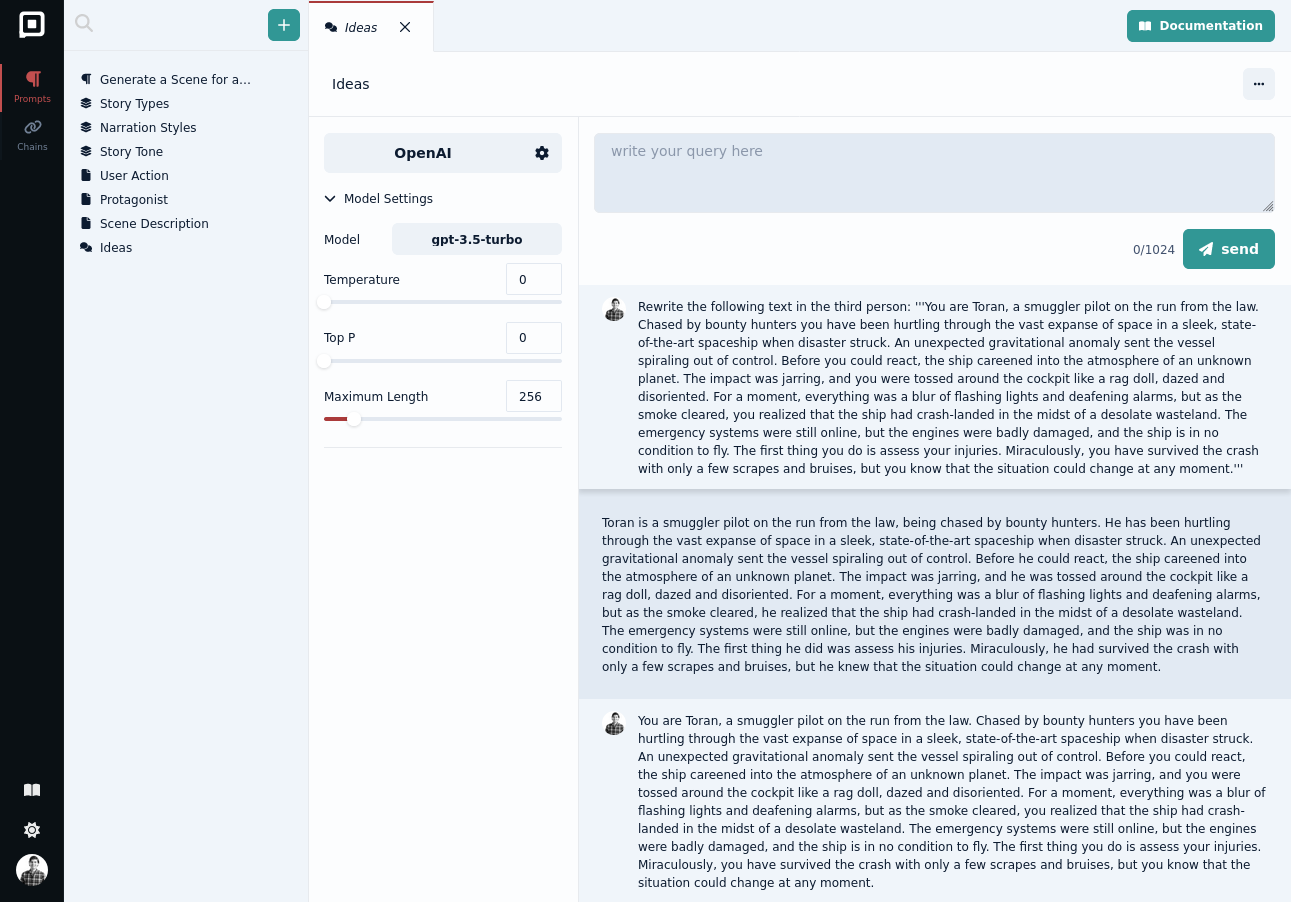

Language models are obviously great at processing language, from translation to text generation. But what excites me most about them is their ability to reason. If you haven't seen it yet, this presentation by Andrej Karpathy provides a comprehensive overview of the topic. If we draw a parallel between a language model and the way we think, a pretrained model is somewhat akin to system 1 thinking: fast and automatic.

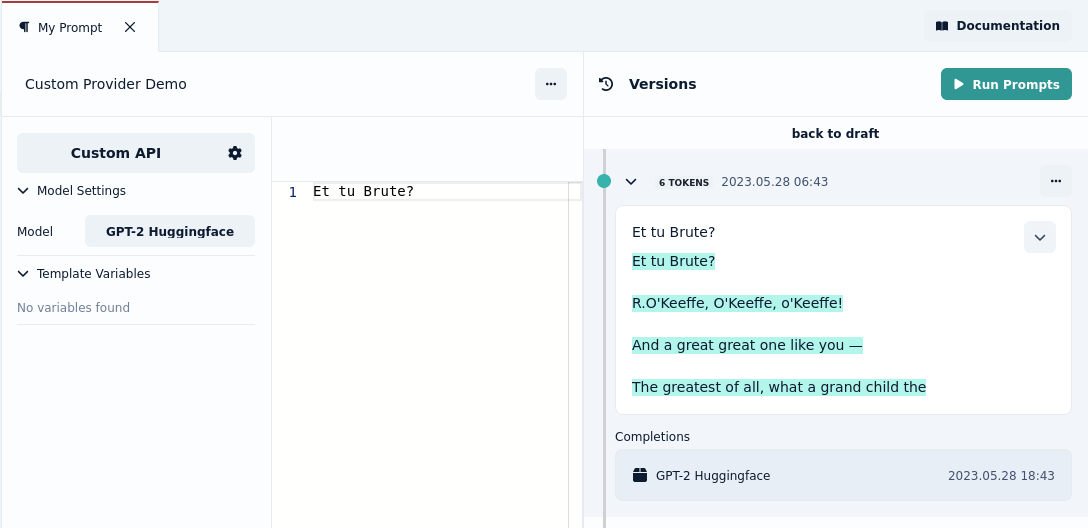

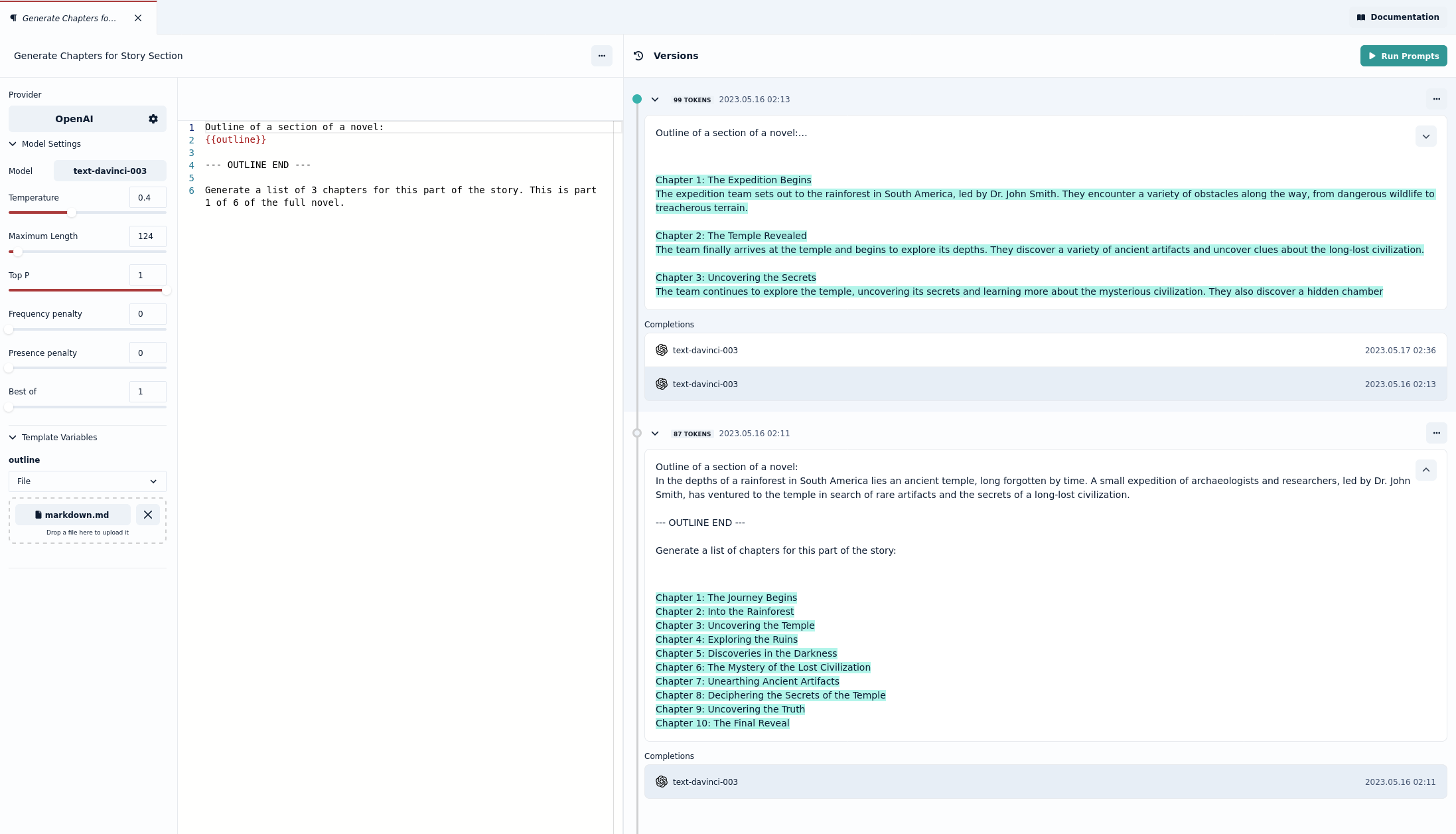

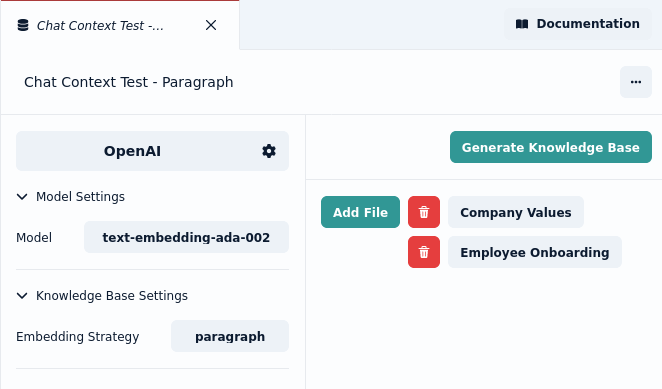

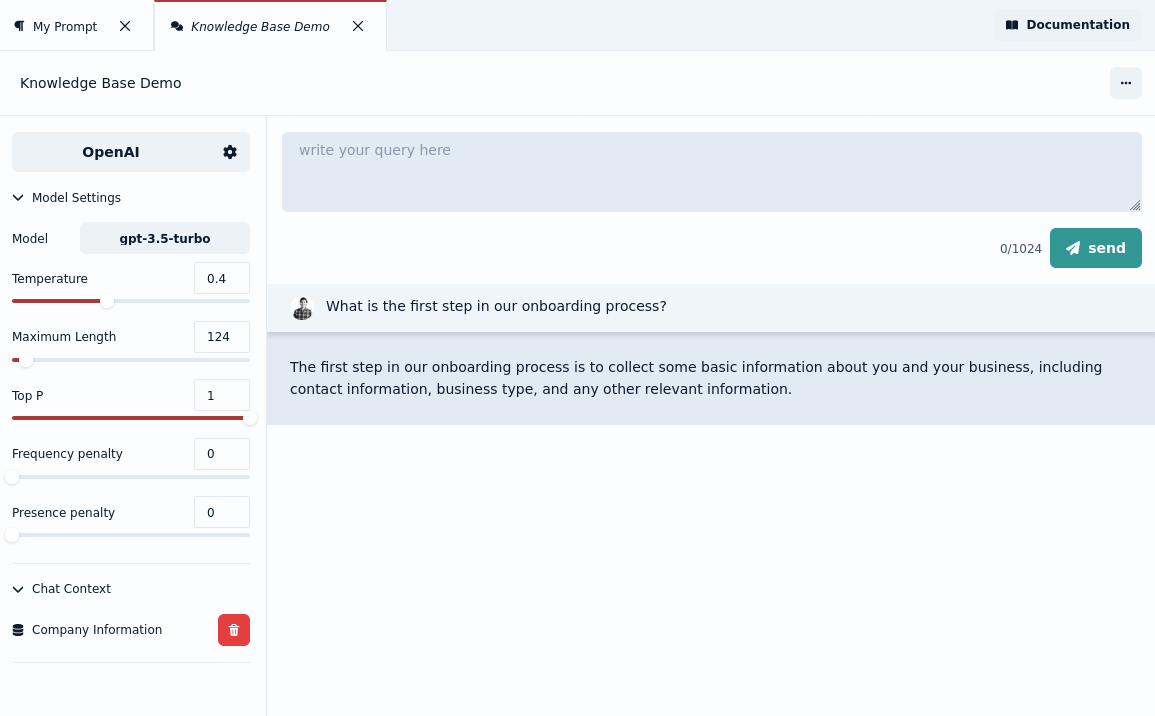

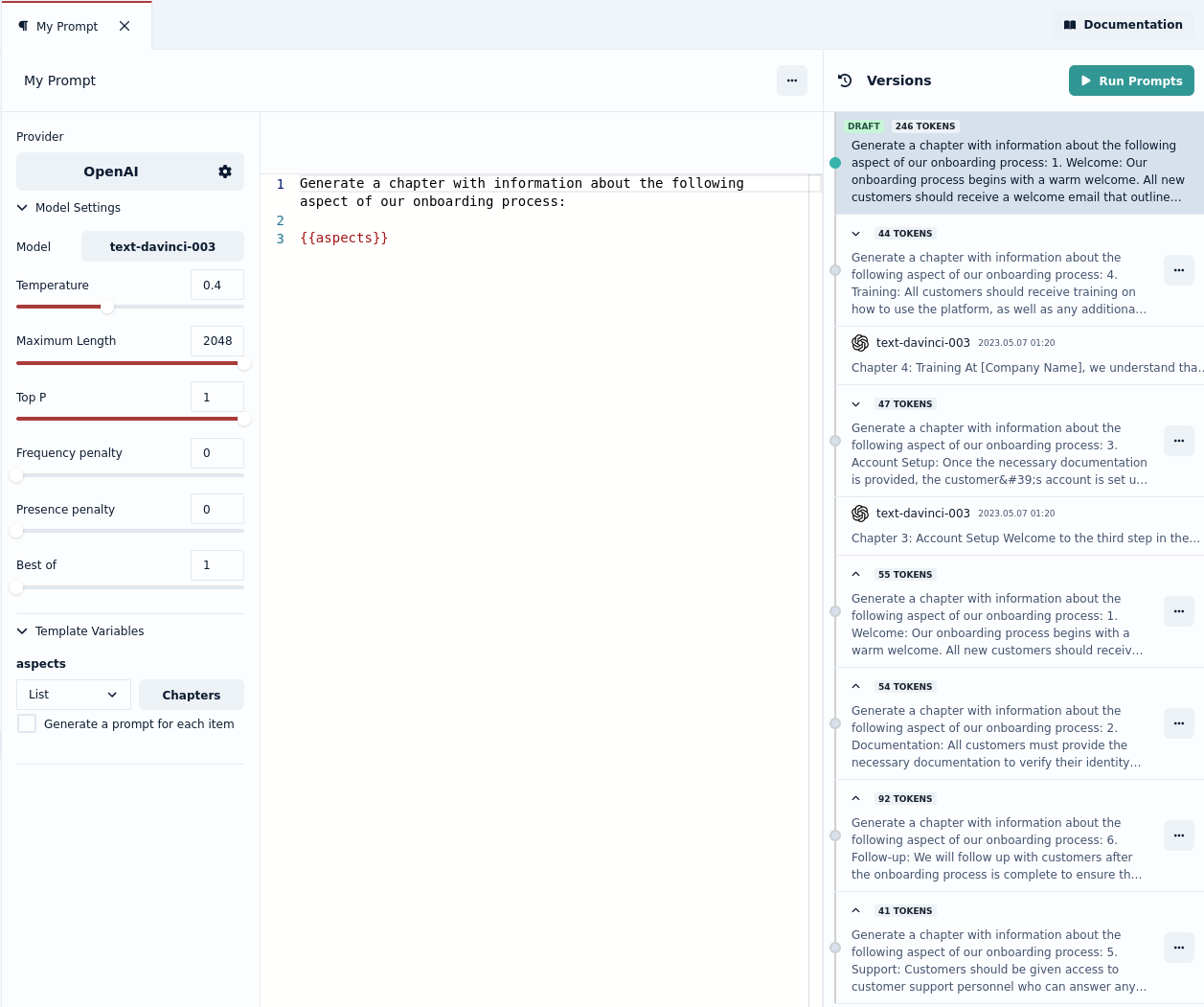

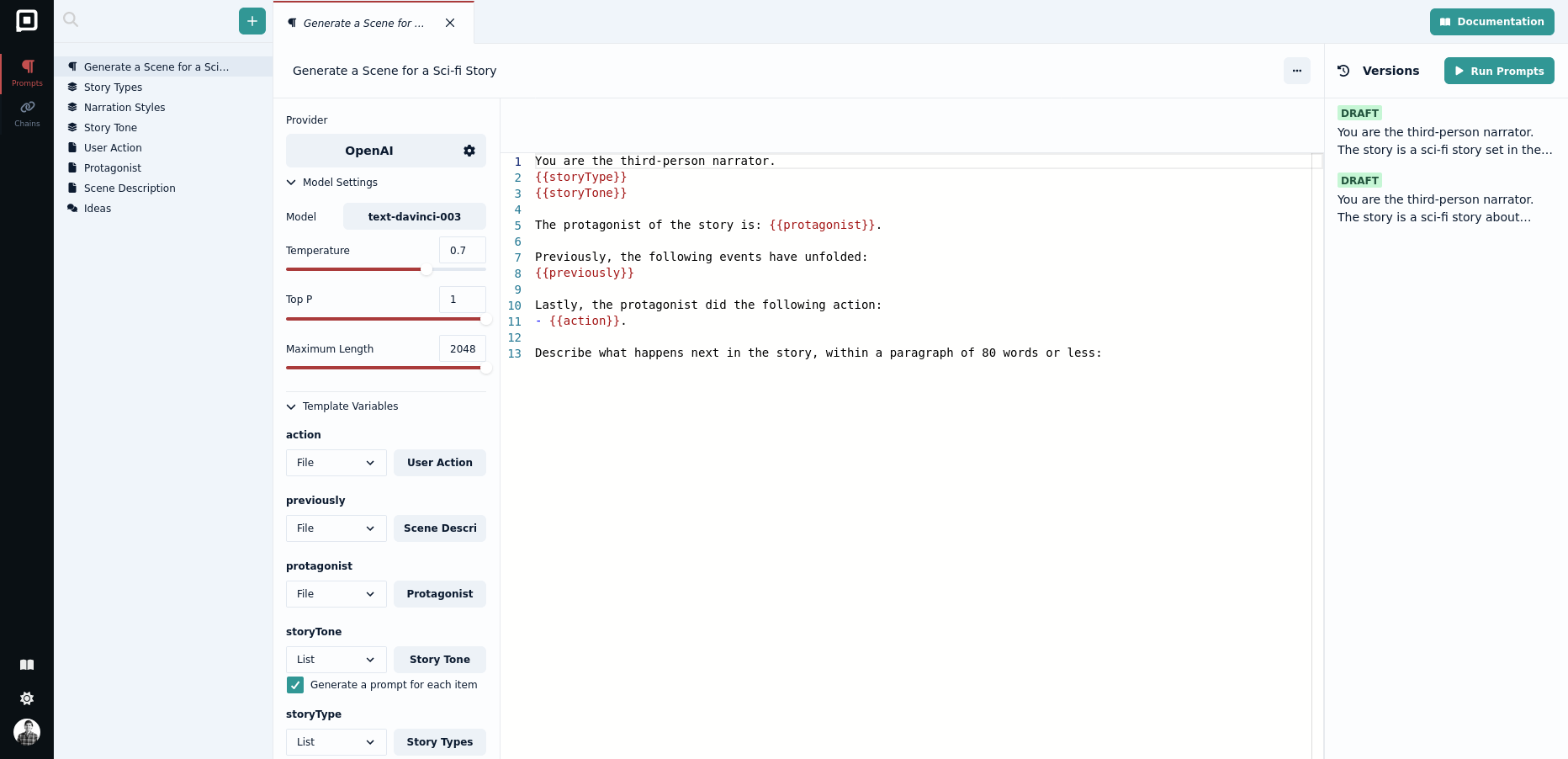

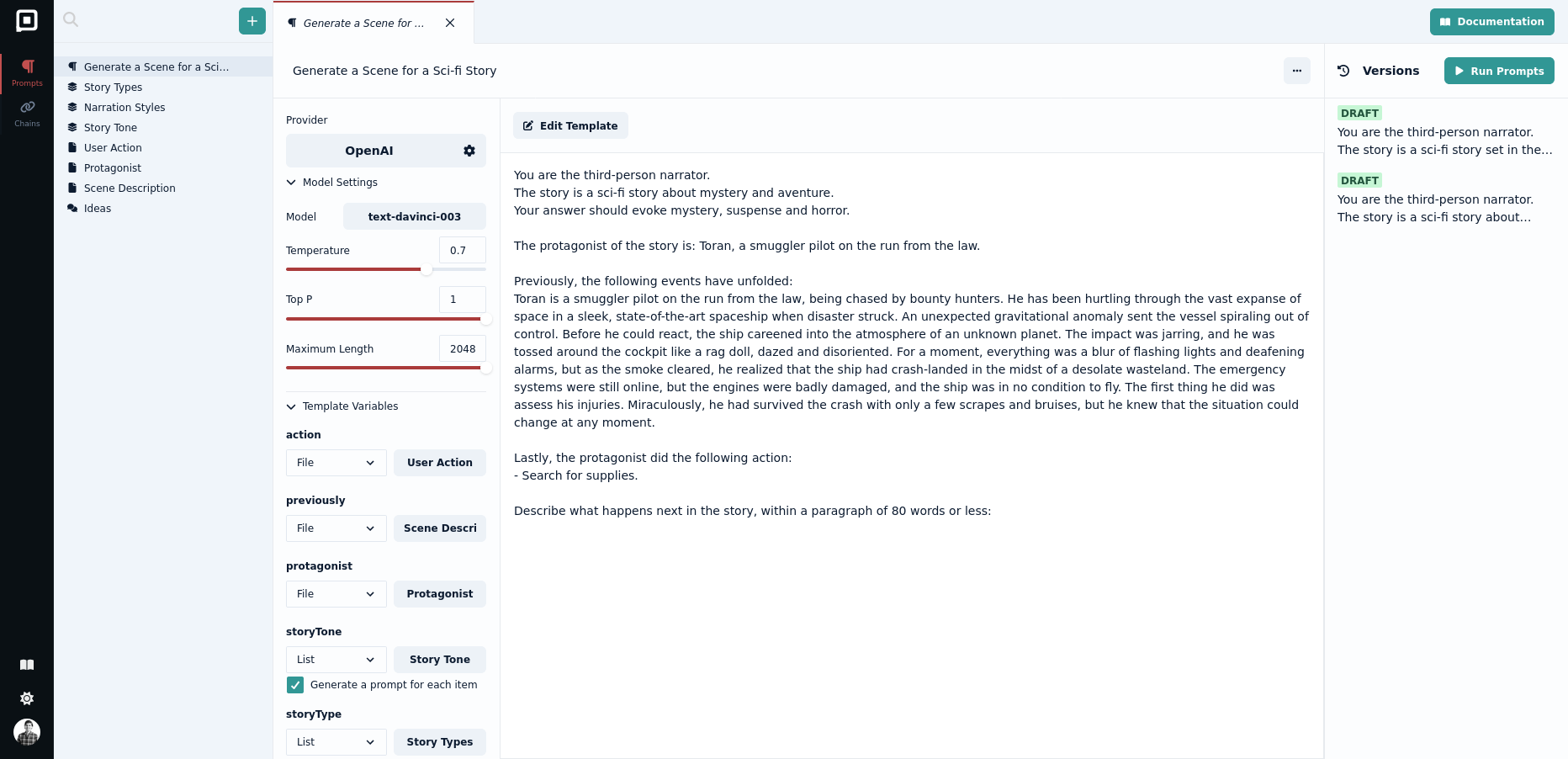

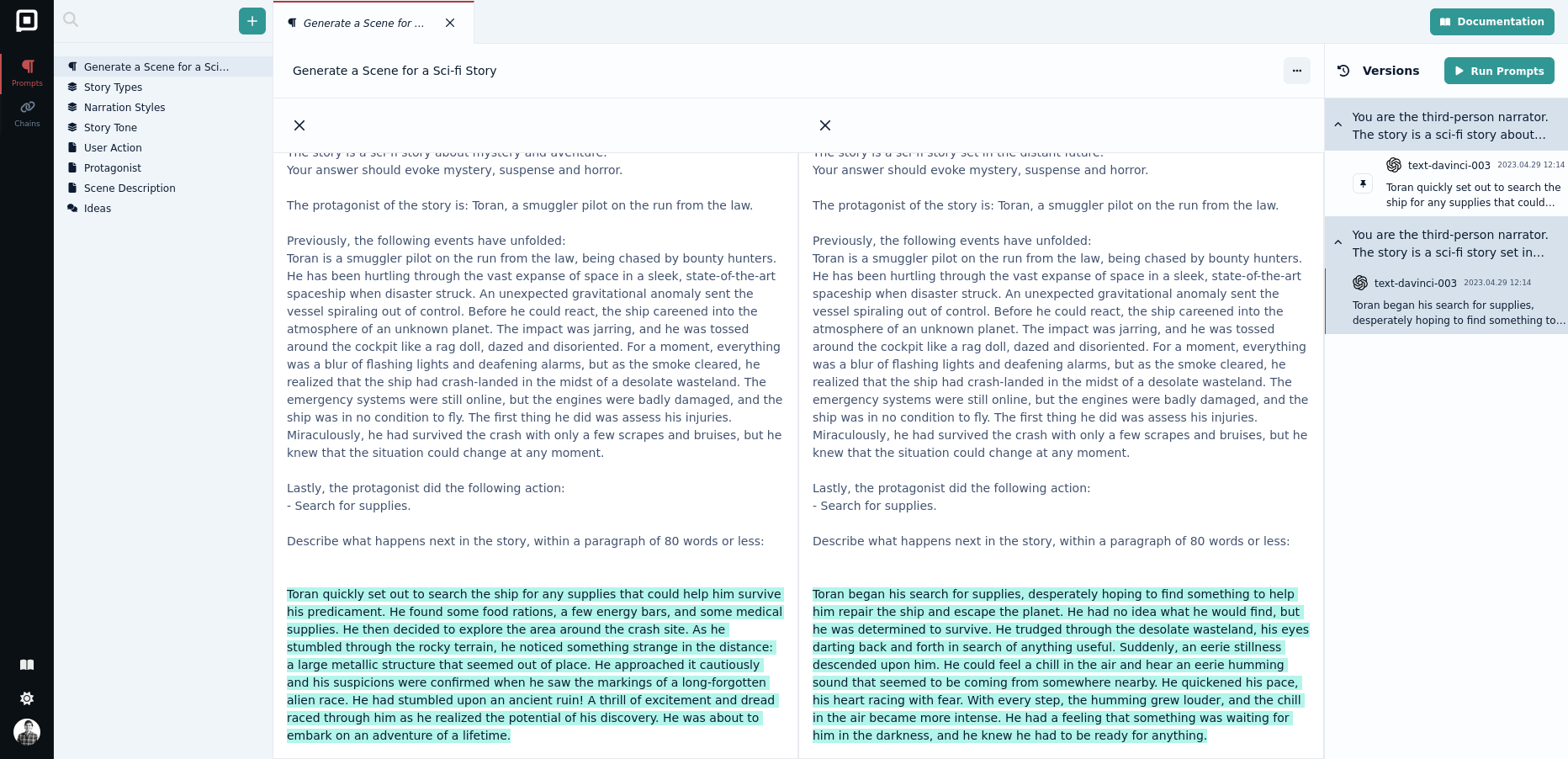

With prompt engineering we add another layer: a more deliberate and targeted approach to getting the results we want. We are seeing many novel approaches emerge, from frameworks like Tree of Thought to prompting languages like Guidance. We want Prompt Studio to be not only the place where you build and track text inputs for language models, but also the place where you build and share the more complex processes that form system 2 thinking on top of language models.

More than a Collection of Libraries

Prompt engineering will become much more mainstream, and the people working with prompts will come from a variety of backgrounds. Given the current shortage of developers and the continuous demand for software, we think most prompt engineers will come from other domains. This is why we want Prompt Studio to bridge the gap between a tool that is only useful to software engineers and one that anyone can use. Our main focus therefore needs to be on usability and collaboration features.

Becoming Open Core

On our own, we cannot keep up with the enormous strides AI development has made in the past few months. This is why we want to focus our efforts on the aspects of prompt engineering where our expertise matters most. We want to provide a layer of collaboration and real-time editing tools, which large organizations badly need, while also offering an open source version of Prompt Studio that anyone can adapt and modify for their own needs. This way the editor will always be free and open source, with a layer of additional functionality for paying customers that will allow us to dedicate our time to making Prompt Studio better.

Thank you for your support and stay tuned for more updates!